AI Has a Power Problem – And It’s Getting Worse

AI is transitioning from a software-driven industry to a power-constrained infrastructure system. As demand for compute explodes, electricity availability, grid capacity, and energy strategy are emerging as the key determinants of AI growth, and of national competitiveness. The companies and countries that control reliable, abundant power will increasingly control the future of AI.

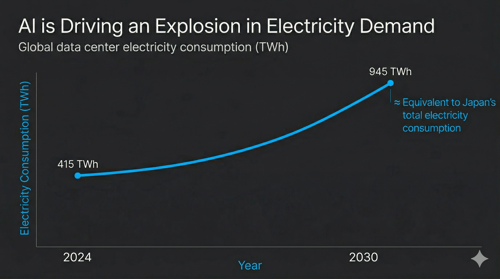

Did you know? The entire nation of Japan (125 million people, bullet trains, neon cities, steel mills, etc.) consumes roughly 950 TWh of electricity each year. By 2030, global data centers are projected to consume roughly the same amount, and AI will be the primary reason why.

If you are in the US and wondering why your electricity bill has crept up recently, part of the explanation may be sitting in a server farm in northern Virginia, drawing more power than the city of Boston, around the clock, every day of the year.

1. THE SCALE OF AI'S POWER DEMAND

Global data center electricity consumption stood at around 415 TWh in 2024, roughly 1.5% of all electricity generated worldwide. By 2030, that figure is projected to more than double to around 945 TWh, growing more than four times faster than electricity demand from all other sectors combined. In the US alone, data centers are on course to account for almost half of all electricity demand growth by the end of this decade.

To put that in perspective: US data centers consumed 183 TWh in 2024, roughly equivalent to the combined annual electricity demand of the Netherlands, Portugal, and New Zealand. By 2030, that figure is projected to reach 426 TWh.
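To make the pace behind these projections concrete, the short sketch below converts each pair of figures into an overall multiple and an implied compound annual growth rate (CAGR). It uses only the numbers quoted above and assumes a six-year window (2024 to 2030) for both series; the `cagr` helper is a hypothetical name introduced for illustration.

```python
# Implied compound annual growth rate (CAGR) from two data points.
# Figures are the projections quoted in the text; the 2024 -> 2030 window
# (6 years) is an assumption applied to both series.

def cagr(start: float, end: float, years: int) -> float:
    """Return the constant yearly growth rate that turns `start` into `end`."""
    return (end / start) ** (1 / years) - 1

projections = {
    "Global data centers": (415, 945),  # TWh, 2024 -> 2030
    "US data centers": (183, 426),      # TWh, 2024 -> 2030
}

for name, (twh_2024, twh_2030) in projections.items():
    multiple = twh_2030 / twh_2024
    rate = cagr(twh_2024, twh_2030, years=6)
    print(f"{name}: x{multiple:.2f} overall, about {rate:.1%} per year")
```

Both series work out to roughly 15% growth per year, sustained for six consecutive years, which is what separates a structural trend from a one-off spike.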

This is not a temporary surge driven by a single wave of model training. It is structural, and it is accelerating.

2. WHY AI IS SO ENERGY-HUNGRY

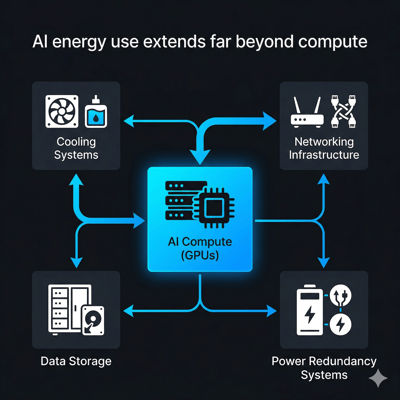

The core reason is architectural: AI runs on dense, power-hungry hardware, and that shapes how it operates. A single ChatGPT query is commonly estimated to consume roughly ten times more electricity than a Google search. AI-optimized server racks require 40 to 60 kW of power, compared to 5 to 15 kW for traditional racks. At the scale of a modern hyperscale data center, those numbers compound rapidly.
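A back-of-the-envelope calculation shows how quickly the rack figures compound. The sketch below assumes a hypothetical hall of 1,000 racks, each drawing the midpoint of the quoted range (10 kW traditional, 50 kW AI-optimized) continuously for a full year, and it ignores cooling and other facility overhead. The rack count and the `annual_energy_gwh` helper are illustrative assumptions, not figures from any real facility.

```python
# Annual energy for a hypothetical data hall, traditional vs AI-optimized racks.
# Assumptions (illustrative only): 1,000 racks, midpoint power draw, 100%
# utilization all year, no cooling or facility overhead included.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours

def annual_energy_gwh(racks: int, kw_per_rack: float) -> float:
    """Energy in GWh if every rack draws `kw_per_rack` around the clock."""
    kwh = racks * kw_per_rack * HOURS_PER_YEAR
    return kwh / 1_000_000  # 1 GWh = 1,000,000 kWh

racks = 1_000                   # hypothetical hall size
traditional_kw = (5 + 15) / 2   # midpoint of the 5-15 kW range
ai_kw = (40 + 60) / 2           # midpoint of the 40-60 kW range

traditional = annual_energy_gwh(racks, traditional_kw)
ai = annual_energy_gwh(racks, ai_kw)
print(f"Traditional hall: {traditional:,.1f} GWh/year")
print(f"AI hall:          {ai:,.1f} GWh/year ({ai / traditional:.0f}x)")
```

Under these assumptions, the same number of racks goes from about 88 GWh to about 438 GWh a year, a fivefold jump before any cooling load is added.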

Training large models gets the headlines, but inference (running AI systems in production, continuously, at scale) generally flies under the radar, even though it accounts for an estimated 80 to 90% of total AI compute. Every time a user interacts with an AI product, a recommendation engine fires, or an enterprise workflow runs a model, electricity is consumed. There is no off switch, which is why AI's energy demand is not a spike but a baseline that keeps rising.

3. THE GLOBAL INFRASTRUCTURE RACE

The United States currently accounts for roughly 44% of global data center power consumption, a share it is projected to maintain through 2030. In 2024, Amazon, Microsoft, Google, and Meta collectively spent over $200 billion on capital expenditure (a 62% year-on-year increase), with AI infrastructure as the primary driver.

China holds approximately 25% of global data center power consumption, growing rapidly under state-led coordination. Europe is expanding but is constrained by grid congestion and slower permitting. The Gulf states are moving fast, backed by sovereign capital and low energy costs, although, as discussed below, their geography now carries a new category of risk.

This race is no longer just about chips or algorithms; it is increasingly about who can build and power infrastructure at scale.

4. THE GRID IS FEELING THE PRESSURE

In July 2024, a voltage fluctuation in northern Virginia caused 60 data centers to disconnect simultaneously, creating a sudden 1,500 MW power surplus and nearly triggering cascading grid failures. It was an accident, but it was also a warning: grids must now be prepared for sudden, massive swings in data center load.

The cost pressure is real and already reaching consumers. In the US PJM electricity market, which stretches from Illinois to North Carolina, data centers drove an estimated $9.3 billion increase in capacity market prices for 2025 to 2026. Residential bills in parts of Maryland and Ohio are expected to rise by $16 to $18 a month as a result. In areas with heavy data center activity, wholesale electricity costs have risen as much as 267% over five years.
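Percentages quoted over multi-year windows are easy to misread, so the sketch below translates the figures above into yearly terms. It assumes "267%" is a percentage increase, meaning prices end at 3.67 times their starting level, and it simply multiplies the quoted monthly bill increases by twelve. The `implied_annual_rate` helper is a hypothetical name used for illustration.

```python
# Translating the cost figures above into per-year terms.
# Assumption: "risen 267%" is a percentage increase, so the end price is
# 1 + 267/100 = 3.67 times the starting price.

def implied_annual_rate(pct_increase: float, years: int) -> float:
    """Constant yearly growth rate that compounds to `pct_increase` over `years`."""
    multiple = 1 + pct_increase / 100
    return multiple ** (1 / years) - 1

rate = implied_annual_rate(267, years=5)
print(f"267% over 5 years is about {rate:.1%} per year, compounded")

# Monthly residential bill increases quoted for Maryland and Ohio, per year.
for monthly in (16, 18):
    print(f"${monthly}/month -> ${monthly * 12}/year")
```

Read this way, the wholesale figure corresponds to roughly 30% compounded growth every year for five years, and the residential increases amount to about $192 to $216 per household per year.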

Regulators and utilities are responding: Texas passed grid reform legislation in mid-2025, and Virginia's Dominion Energy proposed its first base-rate increase since 1992. The policy debate is no longer about whether AI is straining the grid, but about who should pay for it. In March 2026, major US tech companies (including Amazon, Google, Meta, Microsoft, OpenAI, Oracle, and xAI) signed a White House-backed "Ratepayer Protection Pledge" to fund the electricity infrastructure for their AI data centers. The pledge is intended to ensure that tech firms pay for power upgrades, rather than passing the costs on to households, as electricity demand keeps growing.

5. THE NUCLEAR COMEBACK

Faced with insatiable, round-the-clock power demand, the world's largest technology companies are doing something remarkable: they are becoming energy companies.

Microsoft has signed a 20-year power purchase agreement with Constellation Energy to restart Three Mile Island Unit 1, shuttered since 2019, to power its data centers. Google has contracted 500 MW from Kairos Power's small modular reactors and committed capital to three additional US reactor sites totaling 1.8 GW. Amazon is targeting 5 GW of nuclear capacity by 2040. In 2025, Meta, Amazon, Google, and Microsoft signed nearly half of all clean energy deals globally. Excess power is expected to be sold to nearby communities.

The logic is straightforward. Wind and solar alone cannot provide the 24/7 baseload power that AI infrastructure requires; nuclear can. Three Mile Island, once a symbol of nuclear fear after its 1979 accident, is now a symbol of something else entirely: the lengths to which the AI industry will go to secure its power supply.

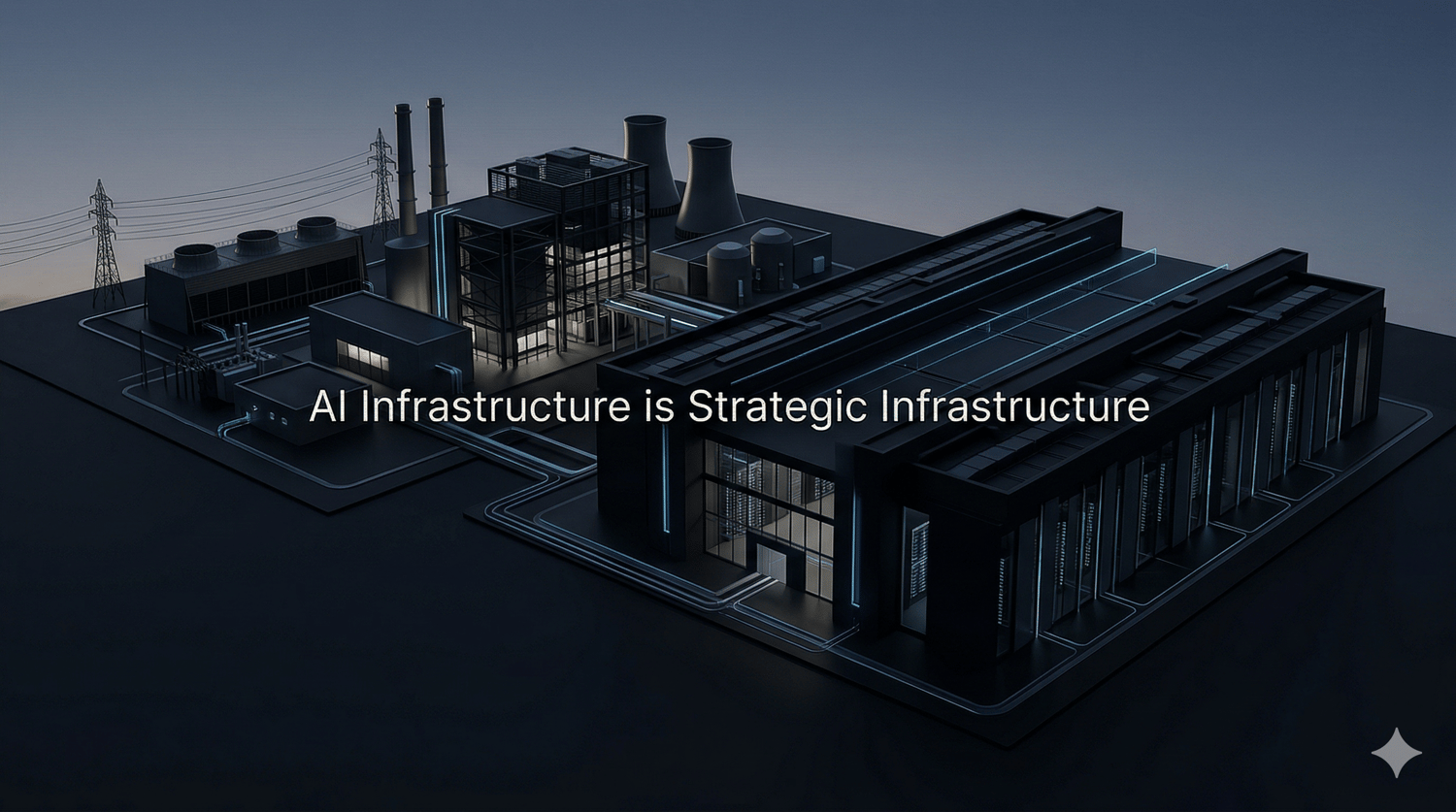

6. AI INFRASTRUCTURE AS STRATEGIC ASSET

The recent Anthropic-Pentagon standoff, which came to a head in February 2026, made something explicit that had been implicit for years: AI models and the infrastructure that runs them are now contested strategic assets.

Anthropic held a Pentagon contract worth up to $200 million, deploying its Claude models in classified systems. When it refused to permit unrestricted use for domestic surveillance and autonomous weapons, the Defense Department designated it a supply chain risk. Within hours, OpenAI announced its own classified deployment deal with the Pentagon, claiming similar ethical limits, while critics questioned whether the contract language meaningfully enforced them.

The episode revealed a fault line that is likely to widen: governments want unrestricted access to AI capability, and AI companies are being forced to define where they draw the line. The infrastructure that runs these models (data centers, cloud platforms, power grids) is no longer neutral ground, but a site of political and military contestation.

7. WHEN DATA CENTERS BECOME TARGETS

On 1 March 2026, Iran struck AWS data centers in the UAE and Bahrain using Shahed-136 drones, taking down several availability zones simultaneously and knocking out digital services across the Gulf. The IRGC reportedly stated that such facilities were legitimate targets because US military AI systems were being hosted and run there. It was the first confirmed military strike on hyperscale AI infrastructure.

The attack exposed a structural vulnerability in how AI infrastructure has been built: concentrated in a small number of regions, tightly integrated with military systems, and, as it turns out, within range of adversaries willing to treat server farms as strategic targets. The Stargate UAE campus, a 5 GW AI facility under construction in Abu Dhabi under a US-UAE partnership, now sits in a region where that risk has been proven.

The question of where to build AI infrastructure has acquired a new dimension: geography is no longer just about land costs and latency, but also about security.

8. INDIA: THE EMERGING CASE

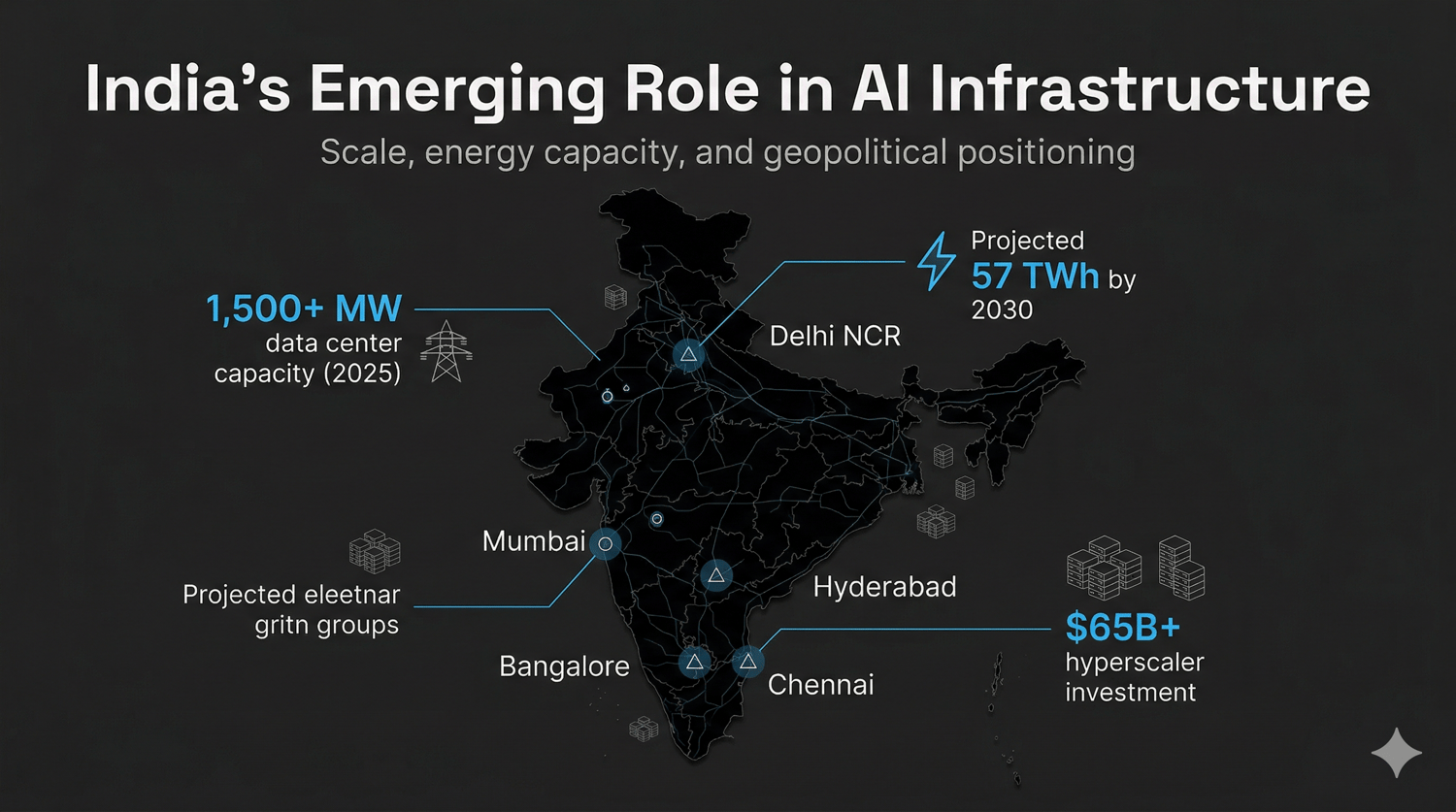

Against this backdrop, India is making a compelling case for itself as the next major AI infrastructure hub, and the case extends beyond economics.

The numbers are strong. Data center capacity has grown from 375 MW in 2020 to over 1,500 MW in 2025, with electricity consumption projected to grow nearly fivefold to 57 TWh by 2030. Google, Microsoft, and Amazon have collectively committed over $65 billion in investments, with major facilities anchored in Mumbai, Hyderabad, Bangalore, Delhi NCR, and Chennai. India's national grid recently crossed 500 GW of installed capacity, a unified infrastructure advantage the government is actively marketing to hyperscalers.
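The India figures can be read the same way as the global ones. The sketch below computes the annual growth rate implied by capacity rising from 375 MW to 1,500 MW over five years, and backs out an approximate current consumption level from the 57 TWh projection. It assumes "nearly five times" can be treated as a 5x multiple, which makes the result a lower bound; the `cagr` helper is a hypothetical name introduced for illustration.

```python
# Growth implied by India's data center figures quoted in the text.
# Assumptions: capacity went from 375 MW (2020) to 1,500 MW (2025), and
# "nearly five times to 57 TWh" is approximated as a 5x multiple.

def cagr(start: float, end: float, years: int) -> float:
    """Constant yearly growth rate that turns `start` into `end`."""
    return (end / start) ** (1 / years) - 1

capacity_rate = cagr(375, 1500, years=5)
print(f"Capacity: x{1500 / 375:.0f} in 5 years, about {capacity_rate:.0%} per year")

consumption_2030_twh = 57
# Growth of slightly less than 5x implies a starting point slightly above this.
implied_baseline_twh = consumption_2030_twh / 5
print(f"Implied current consumption: at least {implied_baseline_twh:.1f} TWh")
```

A fourfold capacity increase in five years corresponds to roughly 32% growth per year, about twice the global data center growth rate implied by the projections in section 1.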

India is also positioning itself as a rule-setter, not just a builder. In February 2026, MeitY's AI Impact Summit concluded with 91 nations signing the New Delhi AI Declaration, which explicitly calls for energy-efficient AI infrastructure as a foundational principle of responsible AI development.

And then there is the security dimension. The Iranian strikes on Gulf infrastructure are likely to prompt Western hyperscalers to reassess the risks of concentrating AI infrastructure in geopolitically volatile regions. India's relative advantage here is structural: a robust democratic framework, civilian oversight of its military and intelligence services, and a foreign policy doctrine of strategic autonomy. That doctrine helps the country maintain productive working relationships with the US, Russia, the EU, China, and the Gulf simultaneously. For corporations making 20-year infrastructure commitments, this combination of stability, scale, and strategic positioning is increasingly difficult to ignore.

FINAL INSIGHT

We are watching AI trigger the first major structural transformation of global electricity systems in decades. After nearly 20 years of flat demand in developed countries, electricity consumption is climbing again, not because of population growth or new industries, but because of software. The implications cascade outward: grid investment, consumer bills, nuclear revival, geopolitical contests, and the weaponisation of AI supply chains.

Electricity is becoming a primary constraint on AI development. As models scale, inference expands, and infrastructure concentrates, the limiting factor is shifting from compute availability to power availability. The next phase of the AI race will not be decided solely by who builds better models, but by who can generate, secure, and sustain the energy required to run them.

NVIDIA, AMD, Google, and others will continue to build state-of-the-art chips, and we will keep seeing energy-optimized AI tools and software. But without electricity (and water), the whole AI industry could come to a standstill. As much as we need computer and data scientists, we also need geographers, electrical engineers, diplomats, and other professionals not traditionally associated with AI.

It is now clear that the next frontier in AI is not an algorithm, but a power plant.

Sources and further reading: